
Elasticsearch filebeat docker

12/27/2023

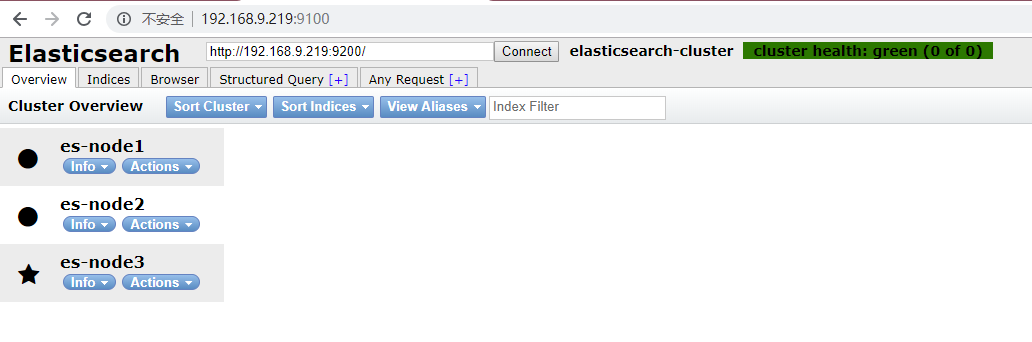

In this tutorial we will learn how to configure Filebeat to run as a DaemonSet in our Kubernetes cluster in order to ship logs to the Elasticsearch backend. We are using Filebeat instead of Fluentd or Fluent Bit because it is an extremely lightweight utility with first-class support for Kubernetes, which makes it well suited to production-level setups.

This blog post is the second in a two-part series. The first post runs through the deployment architecture for the nodes and the deployment of Kibana and ES-HQ.

Filebeat will run as a DaemonSet in our Kubernetes cluster:

- It is deployed in a separate namespace called `logging`.
- Its pods are scheduled on both master nodes and worker nodes.
- Pods on master nodes forward api-server logs for audit and cluster administration purposes.
- Pods on client (worker) nodes forward workload-related logs for application observability.

Creating Filebeat ServiceAccount and ClusterRole

Deploy the following manifest to create the required permissions for Filebeat pods (`apiVersion: /v1beta1`; in its `apiGroups` field, `""` indicates the core API group).

From a security point of view, the ClusterRole permissions should be as limited as possible. That way, if any of the pods associated with this service account gets compromised, the attacker cannot use it to gain access to the entire cluster or to the applications running in it.

Use the following manifest to create a ConfigMap which will be used by Filebeat pods. Set `kubernetes.namespace: myapp` to the namespace in which your app is running; if your app spans more than one namespace, add one condition per namespace.

Important concepts for the Filebeat ConfigMap:

- `hints.enabled`: activates Filebeat's hints-based autodiscover for Kubernetes. With it, we can use pod annotations to pass configuration directly to Filebeat, for example different multiline patterns and various other types of config.
- `include_annotations`: setting this to true makes Filebeat retain the pod annotations for each log entry. These annotations can later be used to filter logs in the Kibana console.
- `include_labels`: setting this to true makes Filebeat retain the pod labels for each log entry. These labels can later be used to filter logs in the Kibana console.
- Namespace filtering: we can filter logs for a particular namespace and process those log entries accordingly, for example by using different multiline patterns for different namespaces.
- Output: the output is set to Elasticsearch because we are using Elasticsearch as the storage backend. Alternatively, it can point to Redis, Logstash, Kafka or even a file.
- Cloud metadata processor: adds some host-specific fields to each log entry, which is helpful when we want to filter logs from a particular worker node.

Use the manifest below to deploy the Filebeat DaemonSet. A few parts of it deserve attention:

- The Filebeat configuration is mounted at `/usr/share/filebeat/filebeat.yml` and passed to Filebeat with the arguments `"-c", "/usr/share/filebeat/filebeat.yml"`. If you are using Red Hat OpenShift, uncomment the line marked for it in the manifest.
- A data folder is mounted as well. It stores a registry of the read status of every file, so Filebeat does not send everything again when a Filebeat pod restarts.
- Docker writes the logs for each container to `/var/lib/docker/containers` on the host. We mount this directory from the host into the Filebeat pod, and Filebeat then processes the logs according to the provided configuration.
- The env var `ELASTICSEARCH_HOST` is set to `elasticsearch.elasticsearch` to refer to the Elasticsearch client service created in part 1 of this article. If you already have an Elasticsearch cluster running, point this env var at it instead.
- Please note the setting in the manifest that makes sure our Filebeat DaemonSet schedules a pod on the master node as well.

Once the Filebeat DaemonSet is deployed, we can check whether our pods get scheduled properly by running `kubectl -n logging get pods -o wide` and comparing its NODE column against the node list (NAME, STATUS, ROLES, AGE, VERSION, INTERNAL-IP, EXTERNAL-IP, OS-IMAGE, KERNEL-VERSION, CONTAINER-RUNTIME) reported by `kubectl get nodes -o wide`. There should be one Filebeat pod per node, master included.
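
As a rough illustration of the least-privilege RBAC setup described above, here is a minimal sketch. It assumes the `logging` namespace, hypothetical object names (`filebeat` for the ServiceAccount, ClusterRole and ClusterRoleBinding), and the `rbac.authorization.k8s.io/v1` API, since the `v1beta1` RBAC API was removed in Kubernetes 1.22. The ClusterRole grants only read-only verbs on namespaces, pods and nodes; whether that set is sufficient for your Filebeat version is an assumption worth checking against Elastic's recommended rules.

```yaml
# Hypothetical least-privilege RBAC for Filebeat (names are assumptions).
apiVersion: v1
kind: ServiceAccount
metadata:
  name: filebeat
  namespace: logging
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: filebeat
rules:
  - apiGroups: [""] # "" indicates the core API group
    resources:
      - namespaces
      - pods
      - nodes
    # Read-only: enough to look up Kubernetes metadata, nothing more.
    verbs:
      - get
      - watch
      - list
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: filebeat
subjects:
  - kind: ServiceAccount
    name: filebeat
    namespace: logging
roleRef:
  kind: ClusterRole
  name: filebeat
  apiGroup: rbac.authorization.k8s.io
```

Because the role has no write verbs, a compromised Filebeat pod could read cluster metadata but not modify workloads.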
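
The ConfigMap concepts listed above can be sketched as follows, assuming Filebeat 7.x-style autodiscover configuration, a hypothetical ConfigMap name `filebeat-config`, and a `NODE_NAME` environment variable set on the Filebeat container. Hints are enabled, a template condition limits collection to the `myapp` namespace with its own multiline pattern, `add_cloud_metadata` enriches each event, and the output reaches Elasticsearch through the `ELASTICSEARCH_HOST` env var. The log path pattern and multiline settings are illustrative assumptions, not the post's original values.

```yaml
# Hypothetical Filebeat ConfigMap (Filebeat 7.x-style syntax assumed).
apiVersion: v1
kind: ConfigMap
metadata:
  name: filebeat-config
  namespace: logging
  labels:
    k8s-app: filebeat
data:
  filebeat.yml: |-
    filebeat.autodiscover:
      providers:
        - type: kubernetes
          node: ${NODE_NAME}
          # Read co.elastic.logs/* annotations from pods (hints-based autodiscover).
          hints.enabled: true
          templates:
            - condition:
                equals:
                  kubernetes.namespace: myapp # your app's namespace; add conditions for more
              config:
                - type: container
                  paths:
                    - /var/log/containers/*-${data.kubernetes.container.id}.log
                  # Append indented lines (e.g. stack traces) to the previous line.
                  multiline.pattern: '^[[:space:]]'
                  multiline.negate: false
                  multiline.match: after

    processors:
      # Adds cloud provider metadata (instance, region, zone) to each event.
      - add_cloud_metadata: ~

    output.elasticsearch:
      # Falls back to elasticsearch:9200 when the env vars are unset.
      hosts: ['${ELASTICSEARCH_HOST:elasticsearch}:${ELASTICSEARCH_PORT:9200}']
```

To ship to Logstash or Kafka instead, the `output.elasticsearch` section would be swapped for the corresponding output section.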

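
A minimal sketch of the DaemonSet that reflects the points above. The image tag, object names (`filebeat`, `filebeat-config`), host data path and toleration keys are assumptions: older clusters taint master nodes with `node-role.kubernetes.io/master` and newer ones with `node-role.kubernetes.io/control-plane`, so both are tolerated here. `/var/log` is mounted alongside `/var/lib/docker/containers` because the files under `/var/log/containers` are symlinks that, with the Docker runtime, ultimately resolve into the Docker log directory.

```yaml
# Hypothetical Filebeat DaemonSet (image tag and names are assumptions).
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat
  namespace: logging
  labels:
    k8s-app: filebeat
spec:
  selector:
    matchLabels:
      k8s-app: filebeat
  template:
    metadata:
      labels:
        k8s-app: filebeat
    spec:
      serviceAccountName: filebeat
      # Tolerate the master/control-plane taint so a pod also runs there.
      tolerations:
        - key: node-role.kubernetes.io/master
          operator: Exists
          effect: NoSchedule
        - key: node-role.kubernetes.io/control-plane
          operator: Exists
          effect: NoSchedule
      containers:
        - name: filebeat
          image: docker.elastic.co/beats/filebeat:7.17.0 # version is an assumption
          args: ["-c", "/usr/share/filebeat/filebeat.yml", "-e"]
          env:
            - name: ELASTICSEARCH_HOST
              value: elasticsearch.elasticsearch # client service from part 1
            - name: ELASTICSEARCH_PORT
              value: "9200"
            - name: NODE_NAME
              valueFrom:
                fieldRef:
                  fieldPath: spec.nodeName
          securityContext:
            runAsUser: 0
          volumeMounts:
            - name: config
              mountPath: /usr/share/filebeat/filebeat.yml
              subPath: filebeat.yml
              readOnly: true
            # Registry of read offsets, so a restarted pod does not resend everything.
            - name: data
              mountPath: /usr/share/filebeat/data
            - name: varlibdockercontainers
              mountPath: /var/lib/docker/containers
              readOnly: true
            - name: varlog
              mountPath: /var/log
              readOnly: true
      volumes:
        - name: config
          configMap:
            name: filebeat-config
            defaultMode: 0640
        - name: data
          hostPath:
            path: /var/lib/filebeat-data
            type: DirectoryOrCreate
        - name: varlibdockercontainers
          hostPath:
            path: /var/lib/docker/containers
        - name: varlog
          hostPath:
            path: /var/log
```

Keeping the registry on a hostPath rather than an `emptyDir` is what lets it survive pod restarts on the same node.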
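
With `hints.enabled` set, application pods can carry their own Filebeat settings as annotations. The sketch below is a hypothetical pod in the `myapp` namespace that uses the `co.elastic.logs/multiline.*` hints so that lines not starting with `[` are appended to the previous line (for example, stack-trace lines following a bracketed timestamp). The pod name, image and log format are illustrative assumptions.

```yaml
# Hypothetical application pod; only the co.elastic.logs/* annotations matter here.
apiVersion: v1
kind: Pod
metadata:
  name: myapp-example
  namespace: myapp
  annotations:
    # Hints read by Filebeat when hints.enabled is true.
    co.elastic.logs/multiline.pattern: '^\['
    co.elastic.logs/multiline.negate: "true"
    co.elastic.logs/multiline.match: after
spec:
  containers:
    - name: app
      image: busybox:1.36
      command: ["sh", "-c", "while true; do echo \"[$(date)] hello\"; sleep 5; done"]
```

Annotation values must be strings, which is why `"true"` is quoted.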